By injecting a sequence of inaudible voice commands, we show a few proof-of-concept attacks. These include activating Siri to initiate a FaceTime call on an iPhone, activating Google Now to switch the phone to airplane mode, and even manipulating the navigation system in an Audi automobile. We propose hardware and software defense solutions. We validate that it is feasible to detect DolphinAttack by classifying the audio with a support vector machine (SVM), and we suggest redesigning voice controllable systems to be resilient to inaudible voice command attacks.
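The SVM detection idea can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' detector. It trains a linear SVM with the Pegasos stochastic subgradient method in plain Python. The two-number feature vectors are made-up placeholders for whatever time- or frequency-domain features one would extract from each recording. The names `train_linear_svm` and `predict`, and the values of `lam` and `epochs`, are hypothetical.

```python
import random
from typing import List, Sequence


def train_linear_svm(
    samples: Sequence[Sequence[float]],
    labels: Sequence[int],
    lam: float = 0.01,
    epochs: int = 200,
    seed: int = 0,
) -> List[float]:
    """Hypothetical helper: linear SVM trained with Pegasos (hinge loss + L2).

    labels: +1 for suspected inaudible-command audio, -1 for genuine audio.
    A constant 1.0 feature is appended so the last weight acts as a bias.
    """
    rng = random.Random(seed)
    data = [list(x) + [1.0] for x in samples]
    w = [0.0] * len(data[0])
    order = list(range(len(data)))
    t = 0
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            t += 1
            eta = 1.0 / (lam * t)  # Pegasos step size 1/(lambda * t)
            x, y = data[i], labels[i]
            margin = y * sum(wj * xj for wj, xj in zip(w, x))
            # Shrink step from the L2 regularizer.
            w = [(1.0 - eta * lam) * wj for wj in w]
            # Hinge loss is active: move the boundary toward y * x.
            if margin < 1.0:
                w = [wj + eta * y * xj for wj, xj in zip(w, x)]
    return w


def predict(w: Sequence[float], x: Sequence[float]) -> int:
    """Hypothetical helper: +1 (flag as attack) or -1 (treat as genuine)."""
    score = sum(wj * xj for wj, xj in zip(w, list(x) + [1.0]))
    return 1 if score >= 0 else -1


if __name__ == "__main__":
    # Toy, made-up feature vectors; real features would come from the audio.
    attack = [[0.90, 0.85], [0.80, 0.90], [0.85, 0.80]]
    genuine = [[0.10, 0.20], [0.20, 0.10], [0.15, 0.15]]
    w = train_linear_svm(attack + genuine, [1, 1, 1, -1, -1, -1])
    print([predict(w, x) for x in attack + genuine])
```

A linear kernel keeps the sketch dependency-free. In practice one would more likely reach for an off-the-shelf SVM implementation and validate the feature set on real recordings of both genuine and demodulated commands.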

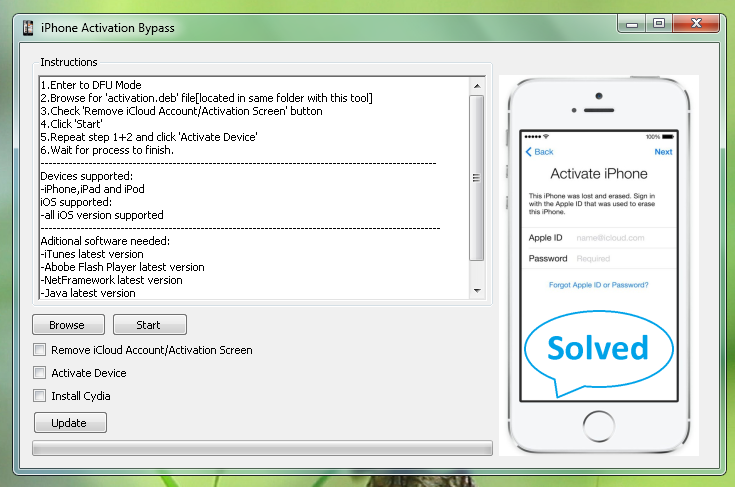

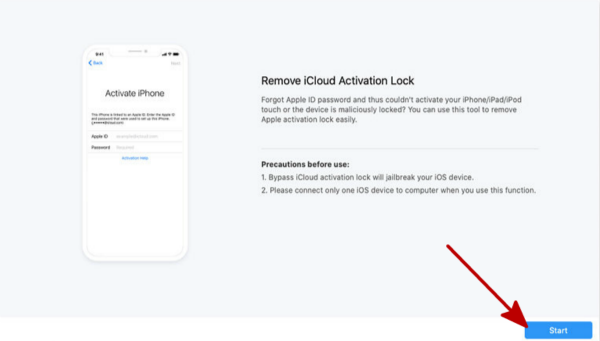

Speech recognition (SR) systems such as Siri or Google Now have become an increasingly popular human-computer interaction method, and they have turned various systems into voice controllable systems (VCS). Prior work on attacking VCS shows that hidden voice commands that are incomprehensible to people can control these systems. Hidden voice commands, though "hidden", are nonetheless audible. In this work, we design a completely inaudible attack, DolphinAttack, that modulates voice commands on ultrasonic carriers (e.g., f > 20 kHz) to achieve inaudibility. By leveraging the nonlinearity of the microphone circuits, the modulated low-frequency audio commands can be successfully demodulated, recovered, and, more importantly, interpreted by the speech recognition systems. We validate DolphinAttack on popular speech recognition systems, including Siri, Google Now, Samsung S Voice, Huawei HiVoice, Cortana and Alexa.
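Why the microphone nonlinearity recovers the command can be seen with a short simulation. This sketch assumes plain amplitude modulation with a carrier, $s(t) = (1 + m\cos\omega_m t)\cos\omega_c t$, and a second-order microphone model $s_{\text{out}} = a_1 s + a_2 s^2$. The squared term expands to

$$
a_2 s^2(t) = \frac{a_2}{2}\left(1 + m\cos\omega_m t\right)^2\left(1 + \cos 2\omega_c t\right),
$$

whose baseband part contains $a_2 m \cos\omega_m t$, a copy of the "voice" tone that survives the microphone's low-pass filter. The 400 Hz tone, 25 kHz carrier, sample rate, and coefficients `a1` and `a2` below are illustrative assumptions, not measured values. The code measures the baseband tone with a single-bin DFT instead of modeling the low-pass filter explicitly.

```python
import math
from typing import List, Sequence


def am_modulate(fs: float, duration: float, f_voice: float,
                f_carrier: float, depth: float = 1.0) -> List[float]:
    """AM with carrier: (1 + depth*cos(2*pi*f_voice*t)) * cos(2*pi*f_carrier*t)."""
    n = int(round(fs * duration))
    return [
        (1.0 + depth * math.cos(2 * math.pi * f_voice * k / fs))
        * math.cos(2 * math.pi * f_carrier * k / fs)
        for k in range(n)
    ]


def microphone_nonlinearity(signal: Sequence[float], a1: float = 1.0,
                            a2: float = 0.1) -> List[float]:
    """Assumed second-order model of the microphone chain: a1*s + a2*s**2."""
    return [a1 * s + a2 * s * s for s in signal]


def tone_amplitude(signal: Sequence[float], fs: float, freq: float) -> float:
    """Amplitude of the component at `freq`, via a single-bin DFT."""
    n = len(signal)
    re = sum(s * math.cos(2 * math.pi * freq * k / fs) for k, s in enumerate(signal))
    im = sum(s * math.sin(2 * math.pi * freq * k / fs) for k, s in enumerate(signal))
    return 2.0 * math.hypot(re, im) / n


if __name__ == "__main__":
    fs, dur = 192_000.0, 0.05  # 0.05 s holds whole cycles of every tone used
    transmitted = am_modulate(fs, dur, f_voice=400.0, f_carrier=25_000.0)
    recorded = microphone_nonlinearity(transmitted, a1=1.0, a2=0.1)
    print("400 Hz before nonlinearity:", tone_amplitude(transmitted, fs, 400.0))
    print("400 Hz after nonlinearity: ", tone_amplitude(recorded, fs, 400.0))
```

The transmitted signal has energy only near 25 kHz (the carrier and its sidebands at 24.6 and 25.4 kHz), so its 400 Hz component is essentially zero. After the squaring term, the 400 Hz amplitude equals $a_2 m$, with a smaller second harmonic at 800 Hz of amplitude $a_2 m^2/4$.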
